“If you’re over 60 you can’t know anything about computers, social networking, app development, and such.” In 1990 there might have been some truth to that claim; today it’s a fiction. Age is no longer a measure of technological savvy.

On the contrary, many folks in their 60s, 70s, and above are the people who invented this stuff: they built the communities of users, developed the hardware, invented the platforms, and coded the software. Without those people, we’d be storing files in metal cabinets, going to the library to look at the encyclopedia, and talking on a phone that’s plugged into the wall.

Take, for example, Fred Showker (his post is what got me thinking on this). He retired in 2017 from his position as the editor and publisher of the “User Group News Network” (UGNN) and offered a summary of some of his history with the computer world.

Fred is just one of tens of thousands of professionals who, in the last thirty-plus years, have devoted much of their lives and resources to the world of personal computers—the hardware, the software, and the logistics of making it happen for you and me.

They used C++ so you could use Swift. They developed hypertext so you could achieve responsive design. They built the 24 lb Osborne 1 (the first “portable” computer) so you could wear one 10,000 times more powerful on your wrist.

Don’t get me wrong, I don’t claim one generation is better than the next. I’m simply pointing out that the idea that people of a certain age know less about computing is a tired, outdated concept. The reality is that the 70-something you pass in the hall every day may have had a hand in inventing the very things you deem most cutting edge. And that grandmother playing with the child across the way may spend this afternoon adding a social media plug-in to the back end of her client’s website.

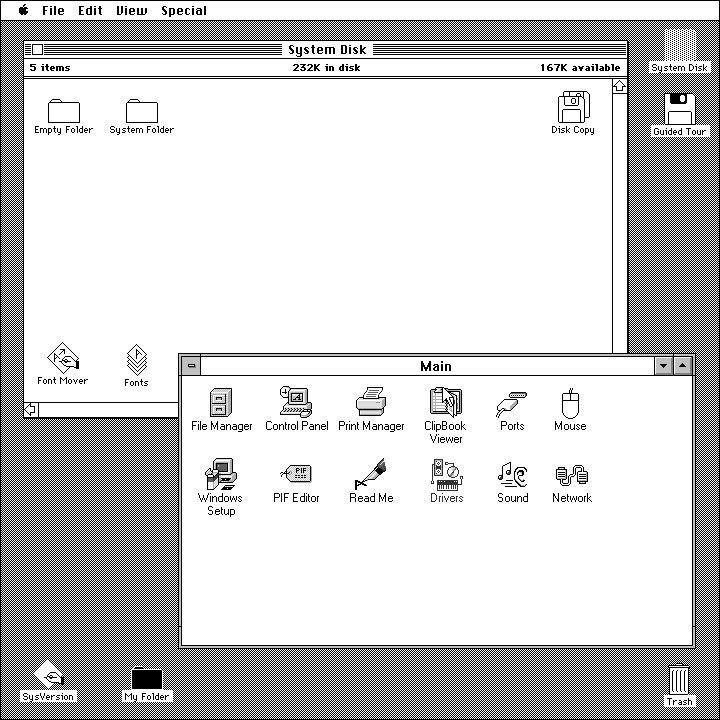

The illustration is an amalgam of the Windows and Mac operating systems.

Posted in MAY 2019 / Chuck Green is the principal of Logic Arts, a design and marketing firm, a contributor to numerous magazines and websites, and the author of books published by Random House, Peachpit Press, and Rockport Publishers. All rights reserved. Copyright 2007-2019 Chuck Green/Logic Arts Corporation.

Chuck . . .

Great image. It brought back a lot of memories and emotions about what you are describing: the work completed on a Mac SE in 1988 with two floppy drives and 1 MB of RAM.

Unfortunately, there are many people in this industry who don’t give a care about someone of age, a person with wisdom, where they came from, what they have done, and most of all what they know about this business.

Employers and clients alike are looking for and concentrating on youngsters with college-degree-level skills and bypassing the years of experience that come with the age-challenged person. It’s a shame.

Nevertheless, thank you for the trip down memory lane.

John